It’s all double Dutch to ChatGPT

Language is power. Whoever decides which words count, which sentences sound right, which expressions are ‘correct’, also decides who belongs and who doesn’t. That question is as old as standardised Dutch itself. But as generative AI reshapes the linguistic landscape, it takes on a new and urgent dimension. Because if ChatGPT and similar systems are increasingly defining what good Dutch looks like, who made sure those systems actually know what Dutch is?

AI AI AI. Everywhere you go online, it’s about AI. The term is so general that it is becoming almost meaningless. It’s comparable to a term such as “animal”: an umbrella for a vast range of species. Personally, I find generative AI the most interesting category. These are systems and tools that are programmed to create new things when prompted, using databases of videos, music, or text. What they can do is incredibly impressive. So it’s no surprise that many people use them every day without a second thought.

The most important question is: what kind of Dutch is actually in these models?

That is the subject of debate. Asking ChatGPT a question is just as irresponsible as buying fast fashion, eating factory-farmed chicken, or taking (short) flights. As the American computational linguist Emily Bender writes in her compelling book The AI Con: AI almost always relies on large-scale data theft, leads to serious privacy breaches, consumes vast amounts of energy, and secretly exploits workers in underdeveloped countries.

Generative AI also presents a number of linguistic challenges. The most important question is: what kind of Dutch is actually in these models? What is certain, is that there is a lot of it: millions of websites and documents, billions of words. It’s not easy to obtain such vast amounts of data. The companies behind these chatbots show little regard for property rights or the law. They take whatever they can find, whether it’s a massive database of illegally collected data or a website for far-right conspiracy theorists.

Dutch is an official language in six countries.

Dutch is an official language in six countries.© Taalunie

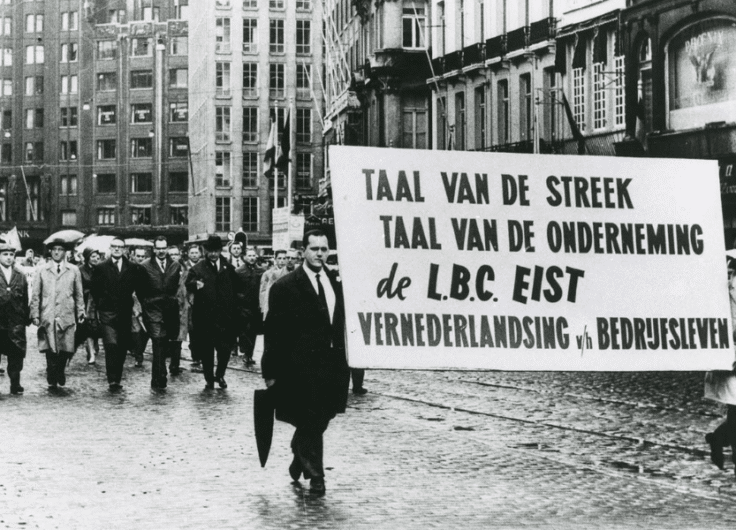

There is yet another problem. Language that exists in digital form is most likely to be included in these datasets. However, digital representation is often highly uneven across different varieties of a language. Most of the Dutch available online is Netherlands Dutch, while Belgian Dutch and Surinamese Dutch are much less represented. Even less data is available for dialects and other varieties. This uneven distribution can be seen in the top twenty largest websites used to train Dutch-language chatbots: eighteen have the .nl domain, only two use .be, and the .sr domain for Suriname is entirely missing. While Netherlands Dutch has more speakers than Surinamese Dutch, all varieties are just as valuable and should be represented.

Why does this matter? It’s about the content chatbots generate. Simply put: the input determines the output. The overrepresentation of Netherlands Dutch therefore leads to a loss of linguistic variation. In doing so, ChatGPT unexpectedly helps advance Dutch standardisation, finally fulfilling the old integrationist dream.

In the midst of all the hullabaloo about the future of Dutch, the real question is not whether the language will survive, but which variety will. And: who decides what Dutch of the future will look like? These questions are not new:the debate around “whose Dutch” is one of the most fundamental in our language. Dutch has traditionally been the language of particular privileged groups, not of the general population. Today’s standard Dutch is derived from the language of the sixteenth-century elite. The concept of the “native speaker” is still considered sacrosanct in language education and linguistics. At the same time, there is, of course, much more Dutch that is equally valuable and should be recognised as part of the language.

In the midst of all the hullabaloo about the future of Dutch, the real question is not whether the language will survive, but which variety will. And: who decides what Dutch of the future will look like?

The Corpus of Contemporary Dutch, a large collection of written texts, is a good example of how hidden biases can slip into our language sources. After all, does this corpus really represent contemporary Dutch? Variants of Dutch from the Netherlands, Belgium, Suriname, and even the Caribbean are included, yet the corpus falls short in genre diversity. More than ninety-eight percent of the corpus consists of newspaper articles. This leads to exactly the same problems as with chatbots. The language found in newspapers is only a small segment of the language, but is a part that is relatively easy to collect digitally.

Generative AI brings back the question of which form of Dutch we want. There is once again a limitation of what is considered Dutch, with the language of certain groups set as the standard. Fortunately, we also have a huge opportunity to make Dutch more inclusive.

If we really want new language models such as GPT-NL to represent Dutch accurately, we must train them on data covering all varieties of Dutch. This includes data from writers and speakers across the entire Dutch-speaking region, from Suriname to West Flanders, from Groningen to Curaçao, including both native speakers and second-language learners. The more diverse the data, the better, for both societies and the language.

Leave a Reply

You must be logged in to post a comment.